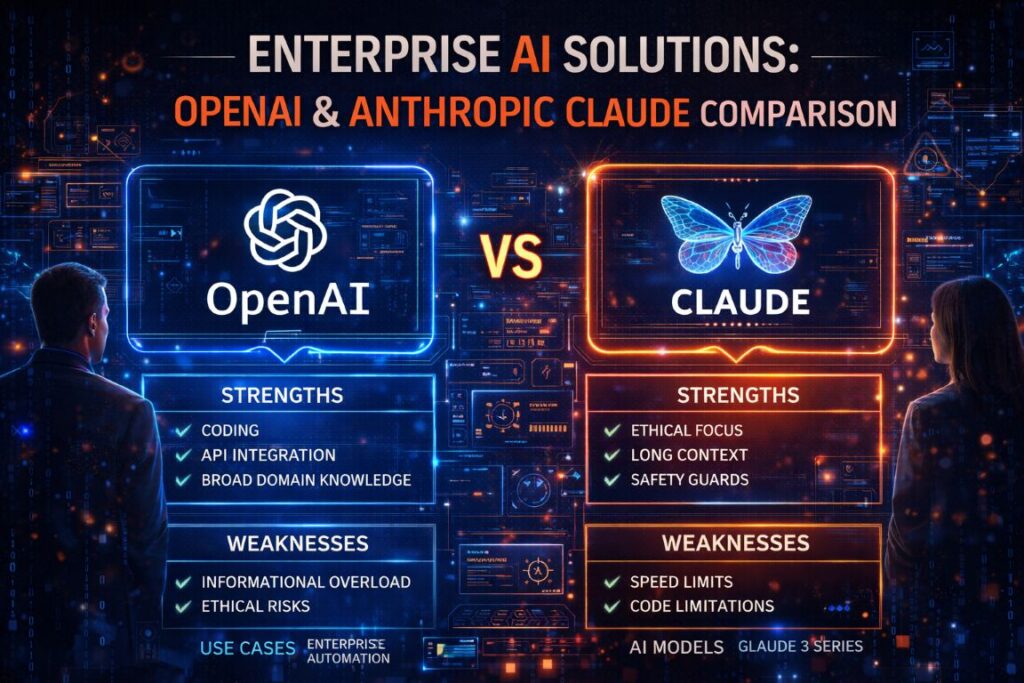

- Claude vs. ChatGPT is not a simple winner-takes-all decision — the right platform depends on your specific enterprise use case, infrastructure, and risk tolerance.

- Anthropic’s Claude leads on long-context processing, handling up to 1 million tokens, making it the stronger choice for legal review, codebase audits, and document-heavy workflows.

- OpenAI holds the multimodal advantage, supporting text, image, audio, and video — giving it an edge for customer-facing and creative enterprise applications.

- Most Fortune 500 enterprises are not choosing one platform — they are running both, with Claude and GPT-4o handling different workloads simultaneously.

- There is a critical difference in how each company approaches AI safety that directly impacts compliance, governance, and enterprise risk — and it goes deeper than most comparison articles reveal.

Choosing between OpenAI and Anthropic Claude is one of the most consequential infrastructure decisions enterprise technology teams are making right now.

If you work with large language models, you have almost certainly used a product from OpenAI or Anthropic, or both. OpenAI popularized the category with ChatGPT, which has hundreds of millions of weekly active users. Anthropic, founded in 2021 by former OpenAI researchers, has grown into the second-largest AI platform by enterprise adoption. Understanding both platforms is now a core competency for technology leaders.

What is Anthropic and Why Does It Matter?

Anthropic is not just another AI company. It was founded with a specific thesis: that powerful AI and safe AI do not have to be in conflict. That founding philosophy shapes every product decision the company makes.

Founded by Former OpenAI Researchers With a Safety-First Mission

Anthropic was founded in 2021 by Dario Amodei (CEO), Daniela Amodei (President), and a small group of other former OpenAI staff who left over disagreements about the pace of safety research relative to capability development. That origin story is more than interesting history: it explains why Anthropic’s technical roadmap prioritizes interpretability, alignment research, and predictable model behavior in ways that differ fundamentally from its competitors.

Constitutional AI: The Framework Behind Claude’s Behavior

Anthropic trains Claude using a methodology called Constitutional AI. Rather than relying solely on human feedback to shape model behavior, Constitutional AI gives the model a set of explicit principles — a “constitution” — that guides how it evaluates and revises its own outputs. This approach gives Anthropic significantly more control over how Claude responds to sensitive, ambiguous, or high-stakes prompts.
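The critique-and-revise idea described above can be illustrated with a toy sketch. This is not Anthropic’s training pipeline: the `generate` callable stands in for any text model, the principles are hypothetical examples, and in the publicly described method the revised outputs are used as training data rather than returned to a user at request time.

```python
from typing import Callable, Sequence

# Hypothetical principles for illustration; not Anthropic's actual constitution.
EXAMPLE_PRINCIPLES = (
    "Do not provide instructions that could help someone cause harm.",
    "Do not present speculation as established fact.",
)


def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],
    principles: Sequence[str] = EXAMPLE_PRINCIPLES,
) -> str:
    """Draft a response, then critique and rewrite it once per principle."""
    draft = generate(prompt)
    for principle in principles:
        # Ask the model to judge its own draft against one explicit principle.
        critique = generate(
            "Critique the response against the principle.\n"
            f"Principle: {principle}\nPrompt: {prompt}\nResponse: {draft}"
        )
        # Ask the model to rewrite the draft in light of that critique.
        draft = generate(
            "Rewrite the response so that it addresses the critique.\n"
            f"Critique: {critique}\nPrompt: {prompt}\nResponse: {draft}"
        )
    return draft
```

The point of the sketch is the shape of the loop: the principles are written down explicitly, so the reasons for a revision can be traced back to a named rule.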

The practical result for enterprise deployments is a model that produces outputs with measurably lower bias toward specific political or social positions, and one that is far less likely to generate harmful or misleading content under adversarial prompting conditions. For industries like legal, healthcare, and financial services, that level of behavioral consistency is not a nice-to-have — it is a compliance requirement.

Anthropic’s Enterprise Footprint: 44% Penetration and 8 of the Fortune 10

Anthropic has rapidly evolved from a safety research lab into an enterprise AI heavyweight. The company has achieved approximately 44% penetration among enterprise AI adopters, with Claude deployed across eight of the Fortune 10 companies. That level of adoption reflects something beyond marketing — it reflects that Claude performs on the specific tasks that matter most in large-scale enterprise environments: long-document analysis, complex reasoning chains, and code generation at scale.

What is OpenAI and What Does It Offer Enterprises?

OpenAI took a different path. Where Anthropic built deliberately toward enterprise trust, OpenAI built for reach — and then worked backward into enterprise. The result is a platform with an extraordinary breadth of capabilities, a massive developer ecosystem, and deep infrastructure partnerships that are difficult to match.

From ChatGPT to Enterprise: OpenAI’s Broad Market Reach

ChatGPT’s launch in late 2022 was the fastest consumer technology adoption in history at the time. OpenAI translated that momentum into enterprise products, including ChatGPT Enterprise, the Assistants API, and fine-tuning capabilities across the GPT-4o model family. OpenAI leads in chatbot deployment, enterprise knowledge management, and customer support use cases — categories where volume, speed, and multimodal flexibility matter more than deep context handling.

Multimodal Capabilities Across Text, Image, Audio, and Video

This is one of the clearest capability gaps between the two platforms. OpenAI supports text, image generation via DALL-E, real-time voice via Advanced Voice Mode, and video understanding. Anthropic currently supports text and image input only; the Claude model family has no native image generation, voice, or video support.

For enterprises building customer-facing applications, interactive voice agents, or visual content workflows, that gap matters enormously. OpenAI’s GPT-4o can process a screenshot, describe it, respond to it verbally, and generate a follow-up image in a single API pipeline. Claude cannot replicate that end-to-end multimodal workflow today.

| Capability | OpenAI (GPT-4o) | Anthropic (Claude 3.5) |
|---|---|---|
| Text Processing | ✓ Yes | ✓ Yes |
| Image Input | ✓ Yes | ✓ Yes |
| Image Generation | ✓ Yes (DALL-E) | ✗ No |
| Voice / Audio | ✓ Yes (Advanced Voice Mode) | ✗ No |
| Video Understanding | ✓ Yes | ✗ No |
| Long Context Window | 128K tokens | 1 million tokens |

Microsoft Azure and Fine-Tuning Advantages for Enterprise Teams

OpenAI’s partnership with Microsoft Azure is one of the most significant enterprise infrastructure advantages in the AI industry. Enterprises already operating on Azure can access OpenAI models directly through Azure OpenAI Service, with enterprise-grade SLAs, private networking, data residency controls, and compliance certifications already baked in. Fine-tuning GPT-4o on proprietary datasets is also available through Azure, giving enterprise teams the ability to adapt the model to domain-specific language, formatting preferences, and behavioral requirements without building a model from scratch.

Claude vs. ChatGPT: Head-to-Head Model Capabilities

Both platforms are capable across a wide range of enterprise tasks, but treating them as interchangeable is a mistake that costs teams time, money, and accuracy. The differences are specific, measurable, and directly relevant to how you architect your AI workflows.

Claude 3.5 and GPT-4o are currently the flagship models from each company. Both handle complex reasoning, code generation, multilingual processing, and document analysis at a high level. But their architectural priorities diverge in ways that become apparent the moment you push either model into demanding, real-world enterprise conditions.

Long-Context Performance: Claude’s 1M Token Window vs. OpenAI’s Limits

Claude’s 1 million token context window is not a marketing figure. It is a fundamental architectural advantage for document-heavy enterprise workflows. At the common rule of thumb of roughly 0.75 English words per token, 1 million tokens is about 750,000 words: enough to hold a 700-page legal contract, a sizable software codebase, or several years of customer support transcripts in a single prompt. GPT-4o supports up to 128,000 tokens, which is substantial but falls significantly short for the most demanding long-context use cases.

Where this matters most is in legal document review, financial audit trails, large-scale codebase analysis, and compliance monitoring. Teams that have attempted to chunk large documents across multiple GPT-4o calls to work around the context limit report inconsistent reasoning across chunks — a problem that disappears when the entire document fits inside a single Claude prompt.
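A rough sketch of the fit-or-chunk decision described above, under stated assumptions: the context limits are the figures quoted in this article, the four-characters-per-token estimate is a crude heuristic rather than a real tokenizer, and `plan_calls` and its `reserve` parameter are hypothetical names introduced for illustration.

```python
# Context limits as quoted in this article; verify against current vendor docs.
CONTEXT_LIMITS = {"gpt-4o": 128_000, "claude": 1_000_000}

CHARS_PER_TOKEN = 4  # rough heuristic for English text, not a tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate; production code should use the vendor tokenizer."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def plan_calls(text: str, model: str, reserve: int = 8_000) -> list[str]:
    """Return [text] if it fits in one prompt, else split on paragraph breaks.

    `reserve` leaves room for instructions and the model's reply.
    A single paragraph larger than the budget is emitted as its own
    oversized chunk; handling that case is left out of this sketch.
    """
    budget = CONTEXT_LIMITS[model] - reserve
    if estimate_tokens(text) <= budget:
        return [text]

    chunks: list[str] = []
    current = ""
    for para in text.split("\n\n"):
        candidate = f"{current}\n\n{para}" if current else para
        if current and estimate_tokens(candidate) > budget:
            chunks.append(current)  # close the chunk before it overflows
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

When `plan_calls` returns more than one chunk, each call sees only part of the document, which is where the cross-chunk inconsistency mentioned above comes from.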

Coding and Data Analysis: Where Claude Has the Edge

Claude 3.5 Sonnet has consistently outperformed competing models on SWE-bench, a standardized benchmark for real-world software engineering tasks. In head-to-head evaluations, Claude demonstrates stronger performance on multi-file code edits, debugging complex logic errors, and producing clean, well-documented code with minimal prompting. For enterprise development teams running codebase audits, building internal developer tools, or automating QA pipelines, Claude’s coding performance is a meaningful differentiator.

Multimodal and Creative Tasks: Where OpenAI Leads

OpenAI’s GPT-4o is the stronger choice when your workflow involves anything beyond text and static images. Real-time voice processing, video frame analysis, and DALL-E image generation give OpenAI a complete multimodal stack that Claude simply does not match today. Enterprises building customer service voice bots, visual product search tools, or AI-generated marketing content will find GPT-4o’s end-to-end multimodal pipeline considerably more capable for those specific applications.

Budget Tier Pricing: OpenAI’s Cost Advantage at Scale

OpenAI’s GPT-4o mini offers one of the most cost-efficient inference options available for high-volume enterprise deployments. For tasks that do not require deep reasoning or long-context handling — classification, summarization, simple Q&A, routing logic — GPT-4o mini delivers strong performance at a price point that makes large-scale deployment economically viable.

Anthropic’s Claude Haiku is the comparable budget-tier option, and it performs well. However, OpenAI’s budget tier benefits from a larger fine-tuning ecosystem and more extensive documentation for cost optimization at scale. Teams running millions of API calls per day will find OpenAI’s pricing structure easier to optimize, especially when combined with Azure commitment tiers.
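To make the scale argument concrete, a back-of-the-envelope cost estimate can be sketched as follows. The function name and parameters are hypothetical, and the prices passed in are placeholders to be replaced with figures from each vendor’s current price list; nothing here reflects actual OpenAI or Anthropic pricing.

```python
def monthly_cost_usd(
    calls_per_day: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_million: float,
    price_out_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from per-call token counts and per-million-token prices."""
    per_call = (
        input_tokens * price_in_per_million
        + output_tokens * price_out_per_million
    ) / 1_000_000
    return calls_per_day * per_call * days


# Placeholder prices only: compare two hypothetical budget tiers at the same volume.
tier_a = monthly_cost_usd(1_000_000, 500, 100, price_in_per_million=1.0, price_out_per_million=2.0)
tier_b = monthly_cost_usd(1_000_000, 500, 100, price_in_per_million=2.0, price_out_per_million=4.0)
```

At millions of calls per day, small per-token differences compound quickly, so it is worth running this arithmetic with real prices and measured token counts before committing to a tier.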

Cloud Ecosystem and Infrastructure Integrations

Where you run your AI models matters as much as which models you run. Both Anthropic and OpenAI have moved aggressively to embed their models into the cloud infrastructure that enterprises already operate on, but they have done so through different partnership strategies with meaningfully different implications for your deployment architecture.

The key question is not just which model performs better in a benchmark. It is which model fits most naturally into your existing cloud environment, security posture, and data governance requirements.

Claude on AWS Bedrock, Google Vertex AI, and Microsoft Foundry

Anthropic has built one of the most cloud-agnostic enterprise deployment footprints in the industry. Claude models are available natively through Amazon Web Services Bedrock, Google Cloud Vertex AI, and Microsoft Azure AI Foundry. For enterprises that operate across multiple cloud providers, or that have a primary AWS or Google Cloud commitment, this means Claude can be deployed without routing data through Anthropic’s own infrastructure, satisfying data residency, sovereignty, and compliance requirements that block many AI deployments in regulated industries.

OpenAI’s Deep Integration With Microsoft Azure

OpenAI’s Azure integration through Azure OpenAI Service remains the gold standard for enterprises that are already committed to the Microsoft ecosystem. The integration includes private endpoints, virtual network support, role-based access control through Microsoft Entra ID (formerly Azure Active Directory), and compliance certifications including SOC 2, ISO 27001, and HIPAA eligibility. For Microsoft-first enterprise environments, the depth of this integration creates a deployment path for OpenAI models that is faster, more secure, and more governable than deploying through OpenAI’s direct API.

Enterprise Use Cases: Which Platform Fits Your Team?

The most useful way to approach this decision is not to ask which platform is better in the abstract, but to map each platform’s strengths directly to the workflows your team needs to run. Both Claude and GPT-4o are exceptional models. The question is fit, not superiority.

The majority of enterprise teams that have deployed AI at scale are not running a single platform. They are running both, with clear internal guidelines about which model handles which category of task. That is not indecision — it is intelligent infrastructure design.

Here is a practical breakdown of where each platform consistently delivers stronger results in enterprise deployments:

Choose Claude for Legal Reviews, Codebase Audits, and Safety-Critical Work

Claude is the stronger choice when your workflow demands exhaustive document analysis, consistent behavioral guardrails, or deep reasoning across large volumes of structured and unstructured data. Legal teams use Claude to review entire contract libraries in a single context window. Engineering teams use it to audit codebases for security vulnerabilities, deprecated dependencies, and style inconsistencies across hundreds of files simultaneously. Compliance teams value Claude’s Constitutional AI training for its predictable, policy-aligned outputs in scenarios where a hallucinated or biased response carries real regulatory risk.

The behavioral consistency that comes from Anthropic’s Constitutional AI methodology also makes Claude the preferred choice for safety-critical deployments — situations where the cost of an unexpected, harmful, or misleading output is high enough that you need a model with a documented, auditable safety framework backing every response.

Choose OpenAI for Customer Support, Knowledge Management, and Multimodal Workflows

OpenAI is the stronger fit when your enterprise needs to handle high-volume, customer-facing interactions across multiple modalities. GPT-4o’s real-time voice processing makes it the natural choice for building AI-powered call center agents, interactive support bots, and voice-driven enterprise assistants. Knowledge management platforms that need to process images, parse scanned documents visually, and generate formatted summaries benefit from OpenAI’s broader input and output format support. If your team is building on top of Microsoft 365, Azure Cognitive Services, or Power Platform, GPT-4o’s native integration into that ecosystem creates the fastest path from prototype to production.

Why Most Enterprises Now Use Both

The majority of large enterprises running serious AI deployments are not locked into a single platform. The most common pattern is using Claude for internal, document-heavy, compliance-sensitive workflows — legal review, internal knowledge base analysis, developer tooling — while running GPT-4o for external-facing applications, creative content pipelines, and multimodal customer interactions. Running both platforms in parallel is not complexity for its own sake. It is the most rational response to a market where two world-class models have genuinely different, complementary strengths.

Safety Records and AI Governance: An Honest Comparison

Anthropic’s Constitutional AI framework gives it a structural advantage in enterprise AI governance conversations. Because Claude’s behavior is shaped by an explicit, documented set of principles rather than purely by human feedback, compliance teams have a cleaner audit trail to point to when explaining model behavior to regulators, legal counsel, or executive stakeholders. Anthropic also publishes detailed model cards and safety evaluation results, making it easier to assess deployment risk for specific use cases in regulated industries like healthcare, financial services, and legal.

OpenAI has significantly strengthened its safety documentation and enterprise governance controls over the past two years, including the introduction of usage policies, system-level content controls through the API, and dedicated enterprise compliance frameworks. However, OpenAI’s development philosophy has historically prioritized capability advancement alongside safety research, while Anthropic was founded explicitly on the premise that safety research should lead capability development. For enterprises where AI governance is a board-level concern — not just an engineering consideration — that philosophical difference carries real weight in vendor evaluation conversations.

The Verdict: OpenAI or Anthropic for Your Enterprise in 2026

OpenAI has broader reach, deeper multimodal support, and a cost-efficient budget tier that makes it the right default for high-volume, consumer-facing, and Microsoft-integrated enterprise deployments. If your workflows involve voice, video, image generation, or tight Azure integration, GPT-4o is your primary platform. Anthropic has stronger long-context performance, a more transparent safety framework, and consistently superior results on coding and complex document analysis tasks that make it the right choice for legal, compliance, and engineering-intensive workloads. Claude’s 1 million token context window alone solves a category of enterprise problem that GPT-4o cannot address today.

The most strategically sound answer for most enterprises in 2026 is not OpenAI or Anthropic. It is OpenAI and Anthropic, with clearly defined internal policies governing which platform handles which workflow category. The enterprises winning with AI right now are not debating which single model is universally better. They are building intelligent, multi-model architectures that route the right task to the right model every time.

Frequently Asked Questions

Below are the most common questions enterprise technology teams ask when evaluating OpenAI and Anthropic Claude side by side.

Is Claude or ChatGPT better for enterprise software development?

Claude is generally the stronger performer for enterprise software development tasks. Claude 3.5 Sonnet has outperformed competing models on SWE-bench, a benchmark designed specifically around real-world software engineering challenges including multi-file edits, bug diagnosis, and code documentation. Its 1 million token context window allows engineering teams to load entire codebases into a single prompt, enabling more coherent analysis across large projects.

That said, GPT-4o is a capable coding assistant and benefits from a significantly larger library of community-generated prompting strategies, fine-tuning examples, and tooling integrations. Teams already embedded in GitHub Copilot, Azure DevOps, or Microsoft’s developer ecosystem will find GPT-4o integrates more naturally into their existing pipelines. For net-new development tooling with no legacy infrastructure constraints, Claude is the stronger technical choice. For teams already in the Microsoft stack, GPT-4o may be the more practical one.

Does Claude support image and video generation like OpenAI?

No. Claude currently supports text and image input — meaning it can analyze and describe images — but it does not support image generation, video generation, or voice output. OpenAI’s platform includes DALL-E for image generation, Advanced Voice Mode for real-time audio, and video frame understanding through GPT-4o.

For enterprises building workflows that require generating visual content, running voice agents, or processing video, OpenAI is the only viable choice between the two platforms today. Anthropic has not publicly announced a timeline for adding generative image or voice capabilities to the Claude model family.

Is Anthropic safer than OpenAI for enterprise deployments?

Anthropic has a more explicitly documented safety methodology through its Constitutional AI framework, which gives compliance and legal teams a clearer audit trail for model behavior in regulated industries. Anthropic’s founding mission centers safety research as the primary objective, not a parallel track to capability development. That philosophical difference translates into more predictable, policy-aligned model outputs, which is particularly important for healthcare, financial services, legal, and government enterprise deployments.

OpenAI has made significant governance improvements and offers strong enterprise compliance controls through Azure OpenAI Service, including HIPAA eligibility, SOC 2 certification, and ISO 27001 compliance. Neither platform is unsafe for enterprise use. The distinction is that Anthropic’s safety framework is more transparent, more auditable, and more directly embedded in the model’s core training methodology — which matters most when you need to explain AI governance decisions to a regulator or a board.

Can enterprises use both OpenAI and Anthropic Claude at the same time?

Yes — and most large enterprises running serious AI deployments already do. The two platforms have complementary strengths, and building a multi-model architecture that routes document-heavy, compliance-sensitive, and code-intensive tasks to Claude while directing multimodal, customer-facing, and Microsoft-integrated workflows to GPT-4o is the most common pattern among mature enterprise AI adopters. Both platforms expose their models via API, making it straightforward to build orchestration layers — using tools like LangChain, LlamaIndex, or custom middleware — that dynamically route prompts based on task type, cost constraints, or compliance requirements.
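A minimal sketch of such a routing layer is shown below, written as custom middleware rather than with LangChain or LlamaIndex. The task categories, the `Task` fields, and the default choice are hypothetical policy decisions made for illustration; the 128K threshold is the GPT-4o context figure quoted in this article.

```python
from dataclasses import dataclass
from enum import Enum


class Platform(Enum):
    CLAUDE = "claude"
    GPT_4O = "gpt-4o"


@dataclass(frozen=True)
class Task:
    kind: str                          # e.g. "legal_review", "voice_agent"
    est_tokens: int = 0                # estimated prompt size
    needs_audio_or_video: bool = False
    compliance_sensitive: bool = False


# Hypothetical category lists mirroring the use cases discussed in this article.
CLAUDE_KINDS = {"legal_review", "codebase_audit", "compliance_monitoring"}
GPT_KINDS = {"customer_support", "voice_agent", "image_generation"}
GPT_4O_CONTEXT = 128_000  # figure quoted in this article


def route(task: Task) -> Platform:
    """Pick a platform from task type, size, and compliance flags."""
    # Modality comes first: per the comparison above, Claude has no audio or video path.
    if task.needs_audio_or_video or task.kind in GPT_KINDS:
        return Platform.GPT_4O
    # Long documents, compliance-sensitive work, and code or legal tasks go to Claude.
    if (
        task.est_tokens > GPT_4O_CONTEXT
        or task.compliance_sensitive
        or task.kind in CLAUDE_KINDS
    ):
        return Platform.CLAUDE
    # Default for everything else; an arbitrary choice in this sketch.
    return Platform.GPT_4O
```

Keeping the policy in one small function makes it easy to review with compliance teams and to change as the platforms evolve.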

Which platform is better for teams building on AWS?

For teams with a primary AWS commitment, Anthropic Claude is the more natural fit. Claude models are available natively through Amazon Bedrock, AWS’s managed foundation model service, with full support for private endpoints, VPC integration, AWS Identity and Access Management controls, and AWS compliance certifications.

This means AWS-first enterprises can deploy Claude at scale without routing sensitive data through external infrastructure, without renegotiating data processing agreements, and without stepping outside their existing cloud security perimeter. The Anthropic-AWS partnership is also backed by a multi-billion dollar investment from Amazon, signaling long-term infrastructure commitment and roadmap alignment between the two companies.

OpenAI models are also accessible on AWS through third-party integrations and marketplace listings, but the native managed service experience is significantly deeper with Claude on Bedrock. For AWS-native teams prioritizing operational simplicity, compliance, and cost consolidation under an existing AWS agreement, Claude on Bedrock is the most straightforward enterprise deployment path available today.

- Amazon Bedrock: Native Claude deployment with VPC support, IAM controls, and AWS compliance coverage

- Private Endpoints: Data stays within your AWS environment — no external routing required

- AWS Investment Backing: Amazon’s multi-billion dollar investment in Anthropic signals long-term infrastructure alignment

- Compliance Ready: Meets data residency and sovereignty requirements for regulated industries operating on AWS

- Cost Consolidation: Claude usage on Bedrock can be applied against existing AWS spend commitments

The practical implication is that AWS-committed enterprises do not have to choose between their cloud strategy and their AI model strategy. Claude on Bedrock lets you run a world-class large language model inside the infrastructure boundary you have already built, secured, and audited.
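As a hedged illustration, a call to Claude through Bedrock might look like the sketch below, which uses the Converse operation of the `bedrock-runtime` client in boto3. The helper names are hypothetical, the model ID is deliberately left as a caller-supplied argument (look up the exact identifier enabled in your account), and the region default is an assumption. Sending the request requires boto3 and AWS credentials with Bedrock access.

```python
def build_converse_request(model_id: str, prompt: str, max_tokens: int = 1024) -> dict:
    """Build keyword arguments for the Bedrock Converse operation."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask_claude_on_bedrock(model_id: str, prompt: str, region: str = "us-east-1") -> str:
    """Send one prompt to a Claude model on Bedrock and return the text reply."""
    import boto3  # imported lazily so the request builder works without the AWS SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(model_id, prompt))
    return response["output"]["message"]["content"][0]["text"]
```

Because the call goes through the standard AWS SDK, the usual IAM policies, VPC endpoints, and logging controls apply to it in the same way as to other AWS services.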

Ultimately, whether your team lands on Claude, GPT-4o, or a combination of both, the strategic imperative is the same: stop treating AI model selection as a one-time procurement decision and start treating it as an ongoing architectural discipline. The platforms are evolving rapidly, the capability gaps are shifting, and the enterprises that build the most adaptable, multi-model infrastructures today will be best positioned to integrate whatever comes next.